While a security team analyses a single incident, attackers’ algorithms can generate thousands of new, polymorphic threats in a fraction of a second. The digital arms race has reached a new level, at which traditional, static defences are no longer sufficient. The only effective response is to deploy security measures that are as intelligent and uncompromising as the attack vectors themselves.

The end of digital peace

The cyber landscape has changed dramatically, as World Economic Forum data confirms. The number of cyber attacks on organisations worldwide has more than doubled in just four years, reaching nearly two thousand incidents per organisation this year. This escalation is worrying for the market as a whole, but smaller businesses have particular cause for concern: they now report inadequate cyber resilience many times more often than they did just a few years ago. The surge in threats is no coincidence. It is a direct by-product of the growing democratisation of artificial intelligence, which has become an extremely powerful tool in the hands of cybercriminals.

The anatomy of an automated attack and the phenomenon of Shadow AI

Modern hacking campaigns have grown dramatically in scale and sophistication. Large language models are being used en masse to write deceptively realistic phishing messages, produce self-modifying malware and automate social engineering attacks. What is emerging is a threat landscape that can learn, adapt and scale in real time, reacting much faster than the operating procedures of a classic security operations centre allow.

However, this phenomenon also has a second, much subtler face, located within organisations themselves: Shadow AI, meaning employees’ use of generative artificial intelligence outside official oversight. According to market reports, the vast majority of companies have generative AI applications running within their structures, and most of those applications operate in the unsanctioned “shadow IT” zone. When employees enter sensitive financial data, analyse customer information or optimise code with unvetted tools, every unmonitored query becomes a potential leak of sensitive information. Internal analyses indicate that almost half of AI-related network traffic contains extremely valuable company data.
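
One way to picture a control for such unmonitored queries is a filter that inspects each prompt before it leaves the company network. The Python sketch below is only an illustration under stated assumptions: the function name `redact_prompt` and the three regular expressions are hypothetical and deliberately simplistic, and a real deployment would rely on a vetted data loss prevention rule set rather than these patterns.

```python
from __future__ import annotations

import re

# Hypothetical, simplified patterns chosen for illustration only.
SENSITIVE_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "iban": re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"),
    "api_key": re.compile(r"\b(?:sk|key|token)[-_][A-Za-z0-9]{16,}\b"),
}


def redact_prompt(prompt: str) -> tuple[str, list[str]]:
    """Mask sensitive-looking substrings and report which categories were found."""
    findings: list[str] = []
    redacted = prompt
    for label, pattern in SENSITIVE_PATTERNS.items():
        if pattern.search(redacted):
            findings.append(label)
            redacted = pattern.sub(f"[REDACTED:{label}]", redacted)
    return redacted, findings
```

A gateway built on this idea could forward the redacted text to the external tool and log the list of findings, so that the security team sees which categories of data employees attempt to share without blocking the tools outright.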

The soft underbelly of algorithms

Artificial intelligence architectures have specific security vulnerabilities at each of their layers, and understanding them is fundamental to building an effective protection strategy. At the environment layer, which supplies the computing power, the risks resemble those known from classical infrastructure, but the complexity of the workloads makes anomalies extremely difficult to detect.

The real challenges start higher up, at the level of the model itself. This is where sophisticated manipulation takes place, such as prompt injection and silent data exfiltration, as reflected in industry security standards such as the OWASP Top 10 for LLM Applications 2025. The context layer, which holds the knowledge bases used for retrieval-augmented generation (RAG), is also an extremely vulnerable point. These collections, which often contain a company’s most unique know-how, have become a priority target for theft.

A new defence paradigm

The attack surface is growing every month, forcing a complete revision of existing doctrines. Rejecting innovation and trying to block access to modern tools leads to a rapid loss of market advantage. The real solution is to use artificial intelligence to protect artificial intelligence itself. This concept requires a holistic approach that covers the entire life cycle of algorithms, starting at the design stage. Integrating control mechanisms into business processes from day one ensures high-performance protection with minimal delay.

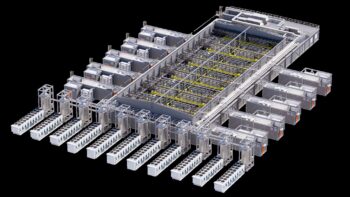

The latest generation of security systems uses sophisticated multi-agent models to analyse billions of events every day. Narrowly specialised algorithms filter out information noise, while larger models identify previously unknown attack patterns in real time. The result is a highly automated analytics pipeline whose structure resembles the tools used by the attackers themselves, except that it serves purely defensive purposes. This paradigm shift is clearly visible in the investment strategies of the largest market players, where intelligent security systems sit at the very top of board agendas, as research on digital trust for the coming years indicates.
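
The division of labour described above, with cheap specialised filters in front of a more expensive analyser, can be sketched as a two-stage triage loop. Everything in the Python example below is an assumption made for illustration: the `Event` fields, the `noise_filter` rule and the `deep_score` heuristic are hypothetical stand-ins, and the scoring function in particular only takes the place that a larger model would occupy in a real system.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, Tuple


@dataclass(frozen=True)
class Event:
    source: str      # system that emitted the event (hypothetical field)
    action: str      # what happened, e.g. "read" or "bulk_export"
    bytes_out: int   # volume of data leaving the system


def noise_filter(event: Event) -> bool:
    """Cheap first stage: keep only events worth a closer look."""
    return event.action != "heartbeat" and event.bytes_out > 0


def deep_score(event: Event) -> float:
    """Stand-in for the expensive second stage; returns a risk score in [0, 1]."""
    score = min(event.bytes_out / 1_000_000, 1.0)
    if event.action == "bulk_export":
        score = min(score + 0.5, 1.0)
    return score


def triage(
    events: Iterable[Event],
    threshold: float = 0.7,
    keep: Callable[[Event], bool] = noise_filter,
    score: Callable[[Event], float] = deep_score,
) -> Iterator[Tuple[Event, float]]:
    """Yield (event, score) pairs for events that pass both stages."""
    for event in events:
        if not keep(event):
            continue  # discarded as noise before the costly stage runs
        risk = score(event)
        if risk >= threshold:
            yield event, risk
```

The point of the design is that the costly scoring step runs only on the small share of events that survive the first filter, which is what makes analysing very large event volumes feasible.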

The synergy of man and machine

Artificial intelligence is not the antagonist in the corporate security story. The same sophisticated mathematical models that enable precision strikes can also give organisations unprecedented levels of protection. However, building an effective ecosystem takes more than technology purchases alone.

Equally important are proper staff training and an architecture based on continuous verification. The future of security relies on skilfully combining the computing power of machines with the critical judgement of analysts and data engineers. True business resilience in the years to come will stem from confidence that the systems driving enterprise growth keep evolving and learning to protect their own assets every day.